arxiv: https://arxiv.org/pdf/2508.08997

Intrinsic Memory Agents: Heterogeneous Multi-Agent LLM Systems through Structured Contextual Memory

(Yuen et al., 2025)

────────────────────────────────────────

1. Executive Summary

Intrinsic Memory Agents (IMA) is a multi-agent framework that equips each Large Language Model (LLM) agent with its own role-aligned, structured “intrinsic memory.” By storing and updating agent-specific knowledge in a predefined JSON template, the system maintains long-term coherence, role fidelity and token efficiency—even when conversations exceed the context window. Benchmarks on structured-planning tasks (PDDL) show a 38.6 % performance improvement over prior memory architectures, and a real-world case study (cloud data-pipeline design) demonstrates higher quality outputs across five quality metrics.

────────────────────────────────────────

2. Main Themes

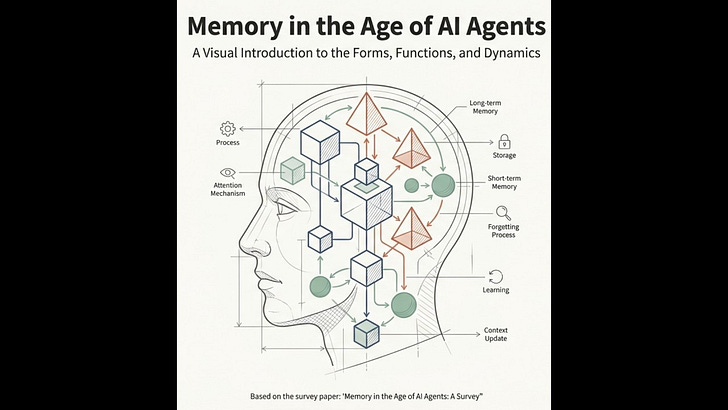

• Context-window limitations are a core bottleneck for multi-agent LLM collaboration.

• Existing memory/RAG solutions are largely single-agent and “homogeneous.”

• Introducing heterogeneous, role-specific memory boosts coordination, planning accuracy and design quality without adding more dialogue turns.

• Token efficiency—not just raw performance—matters for cost-effective enterprise use.

────────────────────────────────────────

3. Key Findings & Results

1. PDDL Benchmark (Numeric Planning)

• Average reward = 0.0833 (vs. 0.0601 for MetaGPT; 0.0231 no-memory).

• Best token efficiency: 5.93 × 10⁻⁷ reward per token.

2. Cloud Data-Pipeline Case Study (10 runs, Llama-3 8b)

Metric improvements over baseline Autogen (median scores):

– Scalability: +2 points (p = 0.0041)

– Reliability: +1.33 points (p = 0.005)

– Cost-effectiveness: +1.45 points (p = 0.004)

– Documentation: +1.56 points (p = 0.0017)

– Usability: +0.67 points (not statistically significant).

3. Overhead

• +32 % tokens vs. baseline, but no significant increase in conversation turns.

────────────────────────────────────────

4. Technical Capabilities

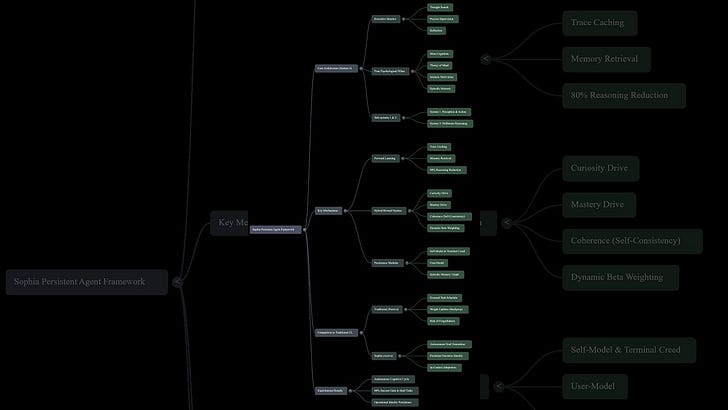

• Structured Memory Templates: Pre-declared JSON slots (e.g., domain_expertise, proposed_solution).

• Intrinsic Memory Update: Memory is rewritten directly from the agent’s latest output via a small prompt (Figure 2) instead of external summarizers.

• Context Construction Algorithm: Always includes (1) task description, (2) the agent’s memory, (3) the most recent turns—prioritizing memory if the window is tight.

• Heterogeneous Agents: Each agent sees a different working context, leading to divergent yet complementary reasoning paths.

• Implementation compatible with AutoGen; open-sourced (GitHub link in paper).

────────────────────────────────────────

5. Representative Quotes

• “The intrinsic nature of memory updates… ensures unique memories that maintain consistency with agent-specific reasoning patterns.”

• “Agentic memory methods provide better contextual integration than pure retrieval approaches, [but] lose critical details… Our approach introduces structured heterogeneous memory for each agent.”

• “Improvements translate to qualitative enhancements in solution quality without increasing the number of conversation turns.”

────────────────────────────────────────

6. Business & Enterprise Applications

1. Complex Solution Design

– Cloud-architecture planning, MLOps pipelines, ERP migrations—any task requiring multiple specialist viewpoints.

2. Long-Running Customer Support Agents

– Preserve per-agent expertise (billing vs. tech-support) over lengthy ticket histories.

3. Compliance & Audit Trails

– Structured memory provides an immediate, machine-readable log of decisions per role—useful for regulated industries.

4. AI-Driven Project Management

– Agents (PM, engineer, QA) keep their own memory, reducing drift in long product cycles.

5. Simulation & Training

– Large-scale role-play (urban planning, defense, economics) where agent heterogeneity is essential.

────────────────────────────────────────

7. Limitations & Challenges

• Memory templates are currently hand-crafted—limits portability.

• +32 % token cost; still requires budget considerations for production.

• Usability scores rose only modestly; richer justification prompts or fine-tuning may be needed.

• Evaluation across broader domains (creative writing, code-review) remains future work.

────────────────────────────────────────

8. Future Research Directions

• Auto-generation of memory templates from role descriptions.

• Fine-tuning individual agents to further exploit heterogeneity.

• Dynamic memory pruning/compaction to lower token overhead.

• Integration with external knowledge graphs for hybrid symbolic-LLM memories.

• Support for multi-modal memories (images, audio) to match multimodal LLMs.

────────────────────────────────────────

9. Data & Reproducibility

• Benchmarks: PDDL tasks via AgentBoard; Data-pipeline design prompts provided in Appendix A.

• Models: Llama-3 8B (PDDL) and Llama-3 3B (pipeline); GPUs: A100.

• Codebase: https://github.com/bingreeky/GMemory (baseline) + IMA repo (forthcoming).

────────────────────────────────────────

10. Takeaways for Decision Makers

• If your organisation relies on multi-agent LLM workflows, intrinsic, role-specific memories can materially improve quality with manageable cost.

• Structured memories also create transparent artefacts (JSON) that can plug into existing observability or compliance stacks.

• Start with high-value, well-structured planning tasks (e.g., infrastructure design) to capture quick ROI before expanding to unstructured domains.

────────────────────────────────────────

Created with AI