arXiv: https://arxiv.org/pdf/2511.03690

Executive summary

- What it is: A modular, production-grade SDK for software development agents that runs locally by default and scales to sandboxed, remote deployments with minimal code changes (swapping the workspace implementation).

- Why it matters: It addresses reliability, security, and deployment friction that hinder agent adoption, with event-sourced state, immutable configuration, and a clean separation between core, tools, workspaces, and server.

- Key gains: Optional sandboxing, deterministic replay, model-agnostic multi‑LLM routing, MCP-native tools, REST/WebSocket server, built‑in VS Code/VNC/Browser workspaces, and security analysis with confirmation controls.

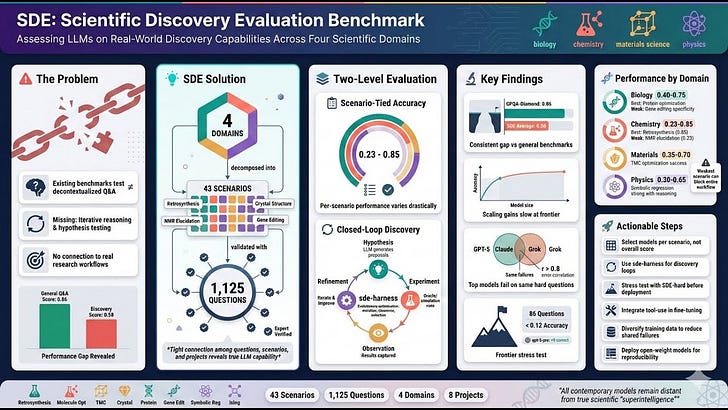

- Proof points: 64k+ GitHub stars (OpenHands ecosystem), reported scores of 72.8% on SWE‑Bench Verified and 67.9% on GAIA (val) with Claude Sonnet 4.5, and low-cost CI that validates agent behavior across models in under 5 minutes per run.

Main themes

- From monolith to modular SDK: A full architectural redesign (V0 → V1) to separate concerns and improve reliability, extensibility, and deployment portability.

- Local-first, deploy-anywhere: Run the same agent locally or in containers/remote runtimes by swapping a workspace implementation.

- Deterministic, recoverable execution: Event-sourced state with immutable agent/config components and a single source of truth for conversation state.

- Vendor-agnostic intelligence: Use 100+ model providers via LiteLLM, including non-function-calling models, with routing, extended thinking, and reasoning support.

- End-to-end production path: Integrated server with APIs and interactive workspaces (VS Code Web, VNC desktop, Chromium) for human-in-the-loop control.

Key findings and capabilities

- Architecture and modularity

- Four packages: openhands.sdk (core), openhands.tools (tool implementations), openhands.workspace (local/remote sandboxes), openhands.agent_server (REST/WebSocket server).

- Two-layer composability: assemble deployment components; safely extend capability components (Agent, Tool, LLM, MCP) without modifying core.

- Strict separation of concerns: SDK is a shared library across CLI, GUI, and integrations; decoupled from benchmarks and apps.

- State and reliability

- Event-sourced state model: Immutable event log with deterministic replay, selective persistence, and recovery to last processed event.

- Stateless components: Agents, tools, and LLMs are immutable and validated at construction. ConversationState is the single mutable source of truth.

- Context window management: Condensers summarize history when needed; logs retain full fidelity. Default condenser can reduce API cost up to 2× without performance loss (as reported).
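The event-sourced model described above can be illustrated with a minimal, standard-library sketch. The names `Event`, `ConversationState`, and `replay` below are hypothetical stand-ins chosen for illustration and are not the SDK's own classes; the point is only that any derived view is rebuilt from an append-only log of immutable events, so replaying the same log always gives the same result.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass(frozen=True)
class Event:
    """An immutable log entry (hypothetical shape, for illustration only)."""
    kind: str      # e.g. "message", "action", "observation"
    payload: str


@dataclass
class ConversationState:
    """The single mutable object: an append-only list of events."""
    events: List[Event] = field(default_factory=list)

    def append(self, event: Event) -> None:
        self.events.append(event)


def replay(events: List[Event]) -> Dict[str, Optional[object]]:
    """Rebuild a derived view purely from the log, with no hidden state."""
    view: Dict[str, Optional[object]] = {"messages": 0, "actions": 0, "last": None}
    for event in events:
        if event.kind == "message":
            view["messages"] += 1
        elif event.kind == "action":
            view["actions"] += 1
        view["last"] = event.payload
    return view
```

Replaying a prefix of the log (for example `replay(state.events[:2])`) yields the view as of the last processed event, which is the recovery behavior the summary describes.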

- Tooling and MCP integration

- Action–Execution–Observation pattern: Type-safe input validation (Pydantic), executor isolation, and structured observations for LLM consumption.

- MCP-native: Converts MCP tool schemas to SDK Action/Observation; MCP tools behave like native tools.

- Tool registry: Decouples specs from executors for cross-process/network execution and lazy, environment-specific instantiation.
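The Action–Execution–Observation pattern and the spec/executor split can be sketched as follows. This is a simplified illustration, not the SDK's implementation: it swaps Pydantic for plain frozen dataclasses with hand-written validation so the example has no dependencies, and `EchoAction`, `Observation`, `register_tool`, and `call_tool` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Tuple


@dataclass(frozen=True)
class EchoAction:
    """Typed tool input; validated once, at construction."""
    text: str
    repeat: int = 1

    def __post_init__(self) -> None:
        if not isinstance(self.text, str) or not self.text:
            raise ValueError("text must be a non-empty string")
        if not isinstance(self.repeat, int) or self.repeat < 1:
            raise ValueError("repeat must be a positive integer")


@dataclass(frozen=True)
class Observation:
    """Structured result handed back to the model."""
    output: str
    is_error: bool = False


def echo_executor(action: EchoAction) -> Observation:
    return Observation(output=" ".join([action.text] * action.repeat))


# The registry stores the action type and an executor *factory*, so the
# executor is only created in the environment that actually runs the tool.
TOOL_REGISTRY: Dict[str, Tuple[type, Callable[[], Callable]]] = {}


def register_tool(name: str, action_type: type, executor_factory: Callable[[], Callable]) -> None:
    TOOL_REGISTRY[name] = (action_type, executor_factory)


def call_tool(name: str, raw_args: dict) -> Observation:
    action_type, executor_factory = TOOL_REGISTRY[name]
    try:
        action = action_type(**raw_args)   # validate at the boundary
    except (TypeError, ValueError) as exc:
        return Observation(output=str(exc), is_error=True)
    return executor_factory()(action)      # lazy executor instantiation


register_tool("echo", EchoAction, lambda: echo_executor)
```

Invalid arguments never reach the executor; they come back as an error observation that the model can read and correct.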

- LLM abstraction and routing

- Supports 100+ providers (LiteLLM). Works with Chat Completions and OpenAI Responses APIs (including advanced reasoning/extended thinking fields).

- Non-native tool use: Enables function-calling for models that lack it via prompt/schema conversion and output parsing.

- Multi-LLM routing: RouterLLM selects models dynamically per message (e.g., route multimodal prompts to multimodal models).
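The per-message routing idea can be sketched in a few lines. This example does not subclass the SDK's RouterLLM (whose interface is not shown in this summary); `Message`, `ModelRouter`, and the model names are hypothetical placeholders used only to show the selection rule from the example above, where multimodal prompts go to a multimodal model.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Message:
    """Hypothetical message shape: text plus optional image attachments."""
    text: str
    image_urls: Tuple[str, ...] = ()


class ModelRouter:
    """Chooses a model name per message; placeholder names, not real models."""

    def __init__(self, text_model: str, multimodal_model: str) -> None:
        self.text_model = text_model
        self.multimodal_model = multimodal_model

    def select(self, message: Message) -> str:
        # Route to the multimodal model only when the message carries images.
        if message.image_urls:
            return self.multimodal_model
        return self.text_model


router = ModelRouter(text_model="text-model", multimodal_model="vision-model")
```

The same shape extends to other criteria, such as sending short prompts to a cheaper model, by adding branches to `select`.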

- Deployment and workspaces

- Conversation factory: Same Conversation API returns LocalConversation (in-process) or RemoteConversation (agent server) depending on workspace.

- Minimal code change to containerize: Replace a local path with DockerWorkspace to run in an isolated container.

- Agent server: FastAPI-based REST/WebSocket service that reconstructs agent configs, executes the agent locally inside the container, and streams events in real time.

- Official Docker images: Include API server, VS Code Web, VNC desktop, and Chromium browser; per-agent isolation and multi-tenancy ready.

- Security and governance

- SecurityAnalyzer + ConfirmationPolicy: Risk-rate actions (low/medium/high/unknown) and enforce approvals; WAITING_FOR_CONFIRMATION state with approve/reject.

- SecretRegistry: Per-session isolation, late binding, masking/redaction, optional encryption, and live rotation; safe propagation to tools (e.g., env vars in shell).

- Built-in security analyzer: LLM-based risk assessment and configurable threshold policy provided by default.
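The interplay between risk rating and a threshold-based confirmation policy can be sketched as below. Note the substitution: the built-in analyzer is described as LLM-based, whereas this example uses a crude keyword rule purely so it runs without a model. `Risk`, `rate_command`, and `needs_confirmation` are hypothetical names, and the keyword lists are arbitrary.

```python
from enum import Enum


class Risk(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"
    UNKNOWN = "unknown"


# Ordering for the rated levels; UNKNOWN is handled separately.
_ORDER = {Risk.LOW: 0, Risk.MEDIUM: 1, Risk.HIGH: 2}


def rate_command(command: str) -> Risk:
    """Keyword stand-in for an analyzer (the real one is LLM-based)."""
    tokens = command.split()
    if not tokens:
        return Risk.UNKNOWN
    if "rm" in tokens or "sudo" in tokens:
        return Risk.HIGH
    if "pip" in tokens or "curl" in tokens:
        return Risk.MEDIUM
    if tokens[0] in {"ls", "cat", "echo"}:
        return Risk.LOW
    return Risk.UNKNOWN


def needs_confirmation(risk: Risk, threshold: Risk = Risk.MEDIUM) -> bool:
    """True if the action should pause for approval.

    `threshold` must be LOW, MEDIUM, or HIGH. UNKNOWN risk always pauses,
    since an unrated action cannot be assumed safe.
    """
    if risk is Risk.UNKNOWN:
        return True
    return _ORDER[risk] >= _ORDER[threshold]
```

When `needs_confirmation` returns `True`, the conversation would enter the WAITING_FOR_CONFIRMATION state and stay there until the user approves or rejects the action.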

- Performance and QA

- Benchmarks (as reported in text):

- SWE-Bench Verified: Claude Sonnet 4.5 72.8%; Claude Sonnet 4 68.0%; GPT‑5 (reasoning=high) 68.8%; Qwen3 Coder 480B A35B 65.2%.

- GAIA (val): Claude Sonnet 4.5 67.9%; Claude Sonnet 4 57.6%; GPT‑5 (reasoning=high) 62.4%; Qwen3 Coder 480B A35B 41.2%.

- CI strategy:

- Programmatic tests: Mock LLM to validate logic and APIs quickly.

- LLM-based tests: Integration and example tests across real models; $0.5–$3 per full run, <5 minutes.

- On-demand benchmarks: $100–$1000, hours per run.

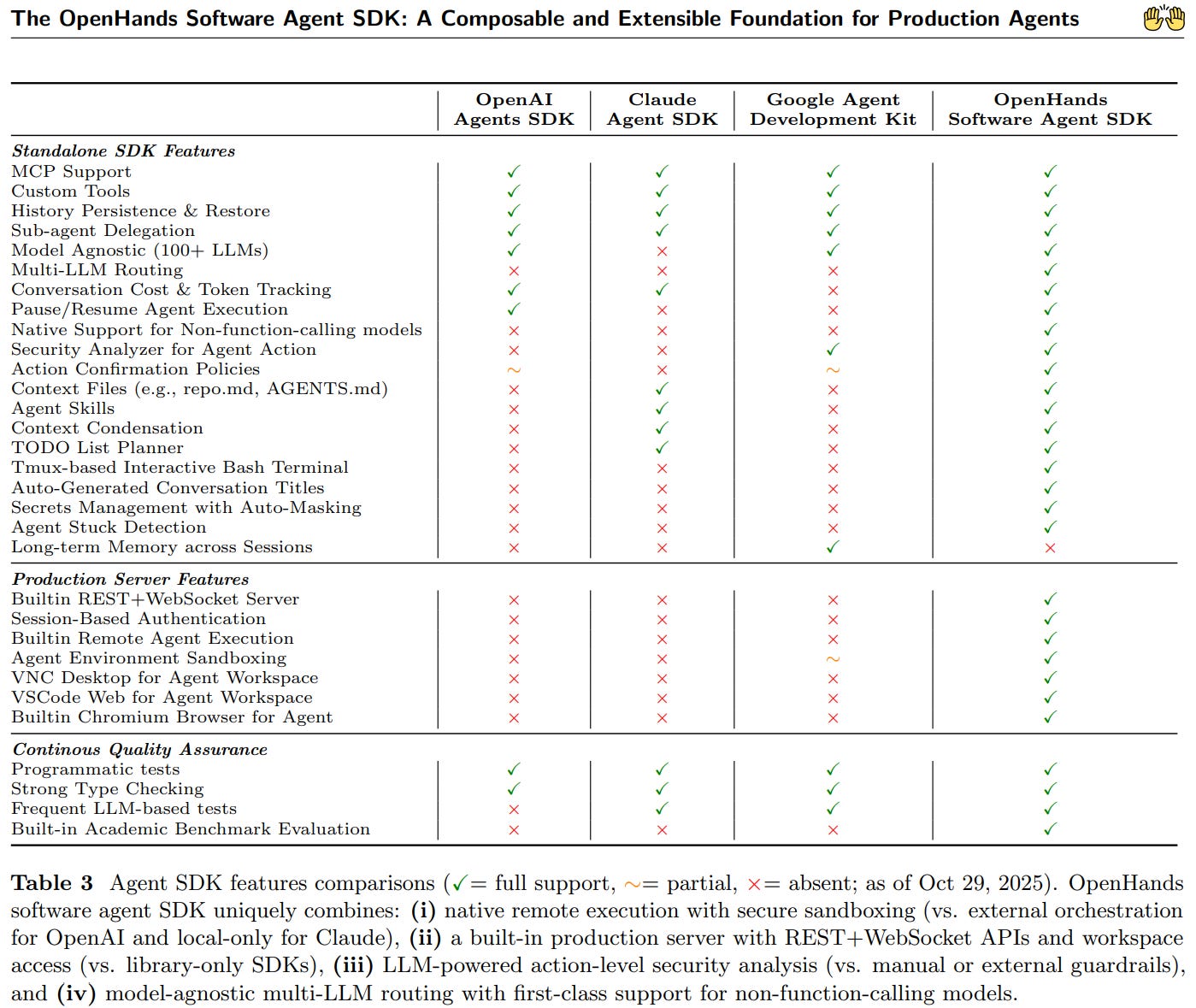

Unique differentiators vs provider SDKs (per text)

- Native sandboxed execution and isolation with first-party server and Docker images.

- Lifecycle control: pause/resume, sub-agent delegation, history restore, and deterministic replay.

- Model-agnostic multi-LLM routing across 100+ providers.

- Built-in security analyzer and confirmation policies.

- Integrated REST/WebSocket services and interactive workspace interfaces (VS Code Web, VNC, browser).

- QA instrumentation: unit tests, LLM-based integration tests, and benchmark harness.

Representative quotes from the text

- “Sandboxing should be opt-in, not universal.”

- “Stateless by default, one source of truth for state.”

- “Strict separation of concerns.”

- “Everything should be composable and safe to extend.”

- “The SDK defines an event-sourced state model with deterministic replay, an immutable configuration for agents, and a typed tool system with MCP integration.”

- “The same agent to run locally for prototyping or remotely in secure, containerized environments with minimal code changes.”

- “Compared with existing SDKs from OpenAI, Claude and Google, OpenHands uniquely integrates native sandboxed execution, lifecycle control, model-agnostic multi-LLM routing, and built-in security analysis.”

- “These elements allow the OpenHands Software Agent SDK to provide a practical foundation for prototyping, unlocking new classes of custom applications, and reliably deploying agents at scale.”

- “Two runs with identical parameters could still diverge subtly [in V0]; V1 treats all agents and components as immutable… The only mutable entity is the conversation state.”

- “This containerized design simplifies deployment and enables SaaS-style multi-tenancy while preserving workspace isolation.”

Business applications and value

- Software engineering automation

- Autonomous coding tasks, bug fixing (SWE-Bench class tasks), refactoring, codebase migration, and test generation.

- CI/CD agents that run gated actions in isolated environments with audit trails and human approval.

- Developer experience platforms

- Embedded agents in IDEs or web workspaces (VS Code Web/VNC/Browser) for long-running tasks and async assistance.

- Secure internal copilots with secret management and risk-based approvals.

- DevOps/SRE and IT automation

- Runbooks and incident response with controlled tool execution, pause/resume, and deterministic replay.

- Infra and environment orchestration via tools and MCP integrations.

- Governance, risk, and compliance

- Action-level risk ratings and approvals; session logs and reproducibility for audits.

- Secret rotation and masking to prevent leakage in logs and model context.

- SaaS and platform integrations

- Multi-tenant agent hosting with per-session isolation; offer agents as services behind APIs and WebSocket streams.

- Vendor-agnostic model routing to control cost/performance and diversify provider risk.

Adoption guide (quick start and extension points)

- Quick start

- Define an LLM (via LiteLLM), get a default agent, create a Conversation pointing at a local project path, send a message, and call run().

- To containerize, replace local path with DockerWorkspace; no other code changes required.

- Extend safely

- Tools: implement Action, ToolExecutor, Observation; register in tool registry; or import MCP tools seamlessly.

- LLM routing: subclass RouterLLM to select models dynamically.

- Policies: implement custom SecurityAnalyzer and ConfirmationPolicy.

- Context: add Skills (programmatic or markdown) and prompt prefixes/suffixes without changing agent logic.

- Delegation: spawn sub-agents via the delegation tool for parallel or hierarchical workflows.

Security and governance considerations

- Use opt-in sandboxing (DockerWorkspace) for untrusted or high-risk tasks; enforce per-conversation resource limits via container runtime.

- Require confirmations for medium/high/unknown risk actions; adjust thresholds by environment (dev vs prod).

- Manage secrets centrally via SecretRegistry integrations; enable rotation without restarting agents.

- Persist and encrypt state as required; use event logs for audits and deterministic replays.
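The secret-handling guidance above (late binding, masking, rotation without restart) can be illustrated with a small sketch. `SecretStore` is a hypothetical class written for this example and is not the SDK's SecretRegistry; it omits encryption and per-session isolation entirely.

```python
from typing import Dict


class SecretStore:
    """Toy secret holder: late-bound env vars plus output masking."""

    MASK = "<secret-hidden>"

    def __init__(self) -> None:
        self._values: Dict[str, str] = {}

    def set(self, name: str, value: str) -> None:
        # Setting an existing name again acts as a live rotation.
        self._values[name] = value

    def env(self) -> Dict[str, str]:
        # Values are read at call time, so a rotated secret is picked up
        # by the next tool invocation without restarting anything.
        return dict(self._values)

    def mask(self, text: str) -> str:
        # Replace longer values first so a secret that contains another
        # secret as a substring is still fully hidden.
        for value in sorted(self._values.values(), key=len, reverse=True):
            if value:
                text = text.replace(value, self.MASK)
        return text
```

A tool wrapper would pass `store.env()` to the shell process and run every line of output through `store.mask()` before it reaches logs or model context. One limitation of this sketch is that a rotated-out value is no longer masked in later output.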

Operational notes and prerequisites

- Core stack: Python, Pydantic models, FastAPI server, LiteLLM, Docker (for sandboxing).

- Performance/cost control: Condenser to manage context tokens; model routing to allocate expensive models only where needed; LLM-based CI costs roughly $0.5–$3 per full run (as reported).

- Scalability: One container per agent session for strong isolation; deploy behind a load balancer; leverage Kubernetes via container images if needed.

Risks and mitigations

- Model variability: Use deterministic replay and CI across multiple models to catch regressions; leverage routing to backstop failures.

- Security of actions: Enforce confirmation policies; run in containers; mask and encrypt secrets.

- State growth and cost: Use condensers; persist incrementally; prune artifacts as needed.

- Tool brittleness: Favor typed Action/Observation schemas; validate at boundaries; treat MCP tools as first-class but monitor server availability.
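The condenser mitigation for state growth can be sketched as a pure function over the event history. This is a simplified illustration under stated assumptions: a real condenser would ask an LLM to write the summary, whereas `condense` here (a hypothetical name) inserts a placeholder string, and events are plain strings rather than typed objects.

```python
from typing import List


def condense(events: List[str], max_events: int, keep_recent: int) -> List[str]:
    """Return the view sent to the model; the input log is never modified.

    If the history fits within `max_events`, it is returned unchanged.
    Otherwise everything except the last `keep_recent` events is replaced
    by a single summary entry (a placeholder here, an LLM summary in practice).
    """
    if keep_recent < 1:
        raise ValueError("keep_recent must be at least 1")
    if len(events) <= max_events:
        return list(events)
    old, recent = events[:-keep_recent], events[-keep_recent:]
    summary = f"[summary of {len(old)} earlier events]"
    return [summary] + recent
```

Because the function returns a new list, the full-fidelity log stays available for audits and replay while the model only sees the shortened view.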

Key links and licensing

- SDK repository: https://github.com/OpenHands/software-agent-sdk

- Benchmarks: https://github.com/OpenHands/benchmarks

- License: MIT

Appendix: minimal workflow (conceptual)

- Local prototyping

- Instantiate LLM and default agent.

- Create Conversation(agent=..., workspace="/path/to/project").

- conversation.send_message("Task…"); conversation.run().

- Remote/containerized

- Replace workspace path with DockerWorkspace(...).

- Keep agent, tools, and messaging code unchanged.

- Observe streamed events via WebSocket for UI/monitoring.
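The local-versus-remote switch in the workflow above rests on a factory that inspects the workspace argument. The sketch below shows that dispatch with stand-in classes only: `LocalConversation` and `RemoteConversation` borrow the names used in this summary but are toy classes defined here, and `ContainerWorkspace`, `make_conversation`, and the image name are hypothetical. It does not import or call the SDK.

```python
from dataclasses import dataclass
from typing import Union


@dataclass(frozen=True)
class ContainerWorkspace:
    """Stand-in for a sandboxed workspace; the image name is a placeholder."""
    image: str


class LocalConversation:
    """Toy in-process conversation bound to a local project path."""

    def __init__(self, agent: str, path: str) -> None:
        self.agent = agent
        self.path = path


class RemoteConversation:
    """Toy conversation that would talk to an agent server in a container."""

    def __init__(self, agent: str, workspace: ContainerWorkspace) -> None:
        self.agent = agent
        self.workspace = workspace


def make_conversation(
    agent: str, workspace: Union[str, ContainerWorkspace]
) -> Union[LocalConversation, RemoteConversation]:
    """Pick the implementation from the workspace type; callers stay unchanged."""
    if isinstance(workspace, str):
        return LocalConversation(agent, workspace)
    if isinstance(workspace, ContainerWorkspace):
        return RemoteConversation(agent, workspace)
    raise TypeError(f"unsupported workspace: {type(workspace).__name__}")
```

Under this design the only line that differs between prototyping and a sandboxed run is the `workspace` argument, which matches the "replace the workspace path" step listed above.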

What’s new versus OpenHands V0

- Optional isolation instead of universal sandboxing; unified code path avoids duplicated local MCP/tool logic.

- Immutable config with a single mutable ConversationState; deterministic replay and reliable recovery.

- Modular SDK separated from apps and benchmarks; smaller, faster core; independent releases.

- Typed, extensible tool system with MCP parity; distributed execution via registry; safer and more composable orchestration.

Decision checklist

- Do you need vendor-agnostic models, MCP tools, and a path from local to isolated deployment?

- Will you require human-in-the-loop approvals, secret management, and auditable logs?

- Do you need to route across models for cost/performance or multimodality?

- Are repeatability and deterministic recovery mandatory for your workflows?

- Do you plan to expose agents via APIs and interactive workspaces?
